
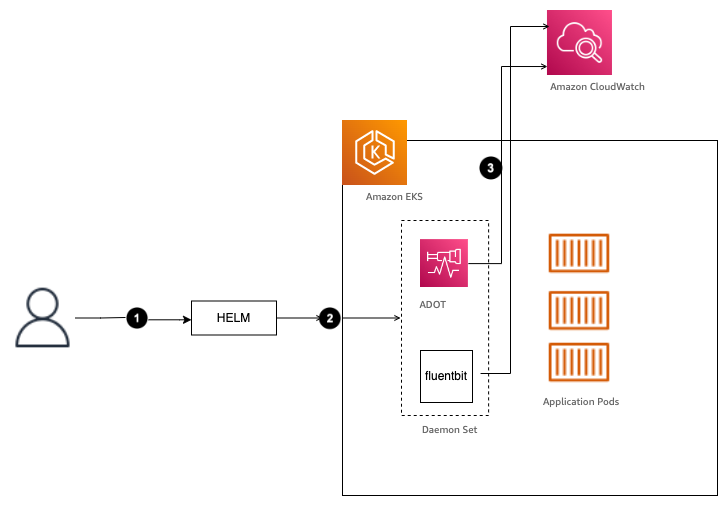

You can actually replace Airflow with X, and you will see this pattern all the time. For example, you can use Jenkins or GitLab (build servers) on a VM, but use them to deploy on Kubernetes. Or you can host them on Kubernetes, but deploy somewhere else, like on a VM. And of course you can run them in Kubernetes and deploy to Kubernetes as well. The reason that I make this distinction is that you typically need to perform some different steps for each scenario.

The simplest way to schedule jobs on Kubernetes from Airflow right now is by using the kubectl command-line utility (in a BashOperator) or the Python SDK. However, you can also deploy your Celery workers on Kubernetes. The Helm chart mentioned below does this.

Work is in progress that should lead to native support by Airflow for scheduling jobs on Kubernetes. The wiki contains a discussion about what this will look like, though the pages haven’t been updated in a while. Development is being done in a fork of Airflow at bloomberg/airflow. Progress can be tracked in Jira (AIRFLOW-1314). This is still work in progress, so it should probably not be deployed in production. A subset of functionality will be released earlier, according to AIRFLOW-1517.

KubernetesPodOperator (coming in 1.10)

In the next release of Airflow (1.10), a new Operator will be introduced that leads to a better, native integration of Airflow with Kubernetes. The cool thing about this Operator will be that you can define custom Docker images per task. Previously, if your task required some Python library or other dependency, you needed to install that on the workers. So your workers ended up hosting the combination of all dependencies of all your DAGs. I think eventually this can replace the CeleryExecutor for many installations.

Documentation for the new Operator can be found here.

Running Airflow on Kubernetes

Airflow is implemented in a modular way. The main components are the scheduler, the webserver, and workers. If you want to distribute workers, you may want to use the CeleryExecutor. In that case, you’ll probably want Flower (a UI for Celery) and you need a queue, like RabbitMQ or Redis.

The first thing you need is a Docker image that packages Airflow. Most people seem to use puckel/docker-airflow. This is a robust Docker image that is up to date and also has an automated build set up, pushing images to Docker Hub. This means that you do not need to build your own image when you are first starting out. The image has an entrypoint script that allows the container to fulfill the role of scheduler, webserver, flower, or worker.

Next you need to create some Kubernetes manifests, or a Helm chart. There is some work in this area, but it is not completely finished yet.

Originally forked from mumoshu/kube-airflow, which seems abandoned. This is where the current development happens (on the airflow branch). This PR is to get it merged into the kubernetes/charts repository. For installation instructions, follow the readme.

`helm install --namespace "airflow" --name "airflow" incubator/airflow`

bloomberg/airflow: This repository contains a Kubernetes manifest that may be inspected for inspiration.

Deploying your DAGs

There are several ways to deploy your DAG files when running Airflow on Kubernetes.

- Mounting Persistent Volume
- Embedding in Docker container

You can store your DAG files on an external volume, and mount this volume into the relevant Pods. In this scenario, your CI/CD pipeline should update the DAG files in the [...] Since all Pods should have the same collection of DAG files, it is recommended to create just one PV.
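To make the single shared DAG volume described in this post more concrete, here is a minimal sketch in Python that builds the relevant Kubernetes manifest fragments as plain dictionaries. The claim name `airflow-dags`, the size `1Gi`, the mount path `/usr/local/airflow/dags`, and the helper names `dags_volume_claim` and `mount_dags` are all assumptions made for illustration; they do not come from the Helm chart or from any of the repositories mentioned here, so adjust them to your own setup.

```python
import json


def dags_volume_claim(name="airflow-dags", size="1Gi"):
    """Build a PersistentVolumeClaim manifest for one shared DAG volume.

    The name and size are illustrative defaults, not values from any chart.
    """
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            # Several Pods mount the same volume, so the storage backend
            # must support a "Many" access mode.
            "accessModes": ["ReadWriteMany"],
            "resources": {"requests": {"storage": size}},
        },
    }


def mount_dags(pod_spec, claim_name="airflow-dags",
               mount_path="/usr/local/airflow/dags"):
    """Return a copy of pod_spec with the DAG volume mounted in every container.

    mount_path is an assumed DAG folder; use whatever your image expects.
    """
    spec = json.loads(json.dumps(pod_spec))  # simple deep copy of plain data
    spec.setdefault("volumes", []).append(
        {"name": "dags", "persistentVolumeClaim": {"claimName": claim_name}}
    )
    for container in spec.get("containers", []):
        container.setdefault("volumeMounts", []).append(
            {"name": "dags", "mountPath": mount_path}
        )
    return spec


if __name__ == "__main__":
    # kubectl accepts JSON as well as YAML, so this output could be piped
    # into `kubectl apply -f -`.
    print(json.dumps(dags_volume_claim(), indent=2))
```

Applying `mount_dags` to the Pod spec of the scheduler, the webserver, and each worker keeps all of them pointed at the same claim, which is the point of creating just one PV. Your CI/CD pipeline then only has to update the files in one place.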
